This is a new record for me. Ever since I started writing entries in my blog, way back in 2012, I have never managed to write something every day, let alone for 30 days running. I think the closest I came was in early 2015, when I started to transition from teaching to IT and had more time, and that’s 6 years ago!

So why did I do it?

Well, firstly, I now have more time and crucially, time to be more creative. But it’s more than that - I do really enjoy sharing things I know or find out, and hope there are people out there who appreciate it. Lastly, I wondered whether I could maintain the daily writing and clearly, I can, at least for this period.

Now, I’m going to continue, of course, but at a slower cadence. I have some other projects I want to work on and am excited about, but before I do, I wanted to share some tips for anyone who wants to do something similar with their blog.

Let’s begin!

1. Organisation

The number one tip I have is to be organised because that takes away some of the friction of writing. In my case, that manifests itself in a number of ways:

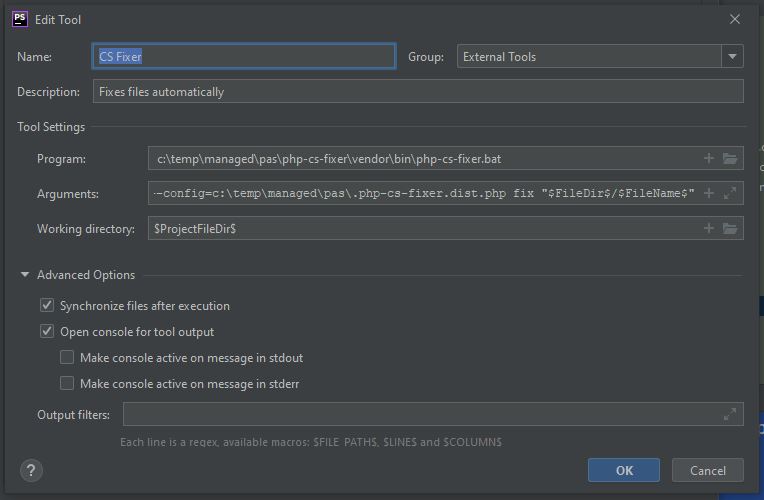

- All my blog posts are templated. There is a header, a main image, and a footer, before I even type a single word. That saves me some time from the get-go.

- I take all of my banner images from one place - Pixabay. Free, easy, and I just make sure I attribute them to the creator.

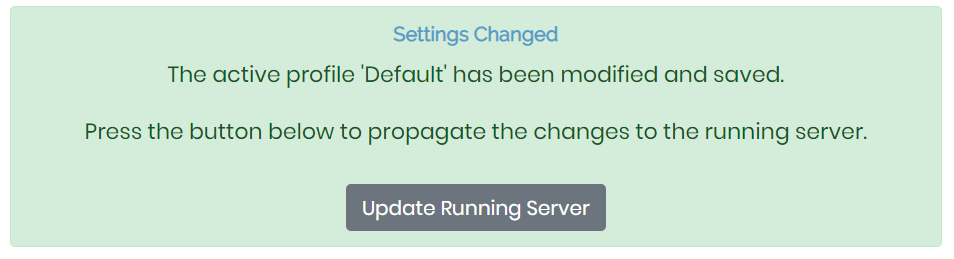

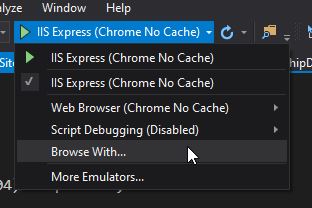

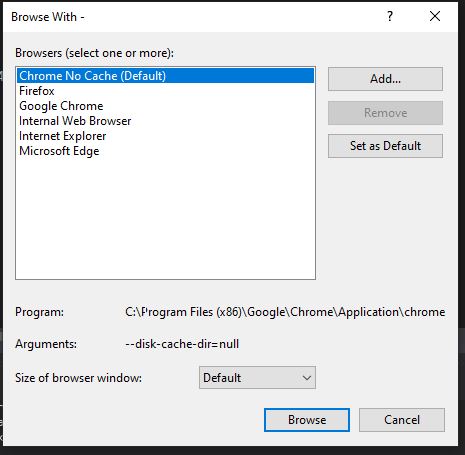

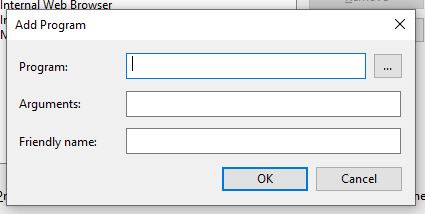

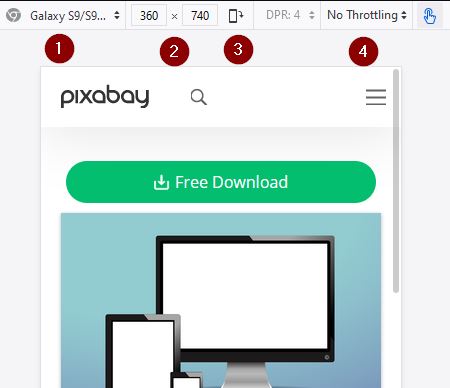

- I have scripts which present my blog, plus it is designed so that when I make any changes, they are reflected in the web browser immediately. That really helps and means I can concentrate on the writing.

- The general layout and points I want to make before I come to actually write the blog post are usually typed up in advance. This ties into my ideas log section later, but is a huge time saver; I don’t need to think too hard about what I need to write because really, I am just fleshing out the details.

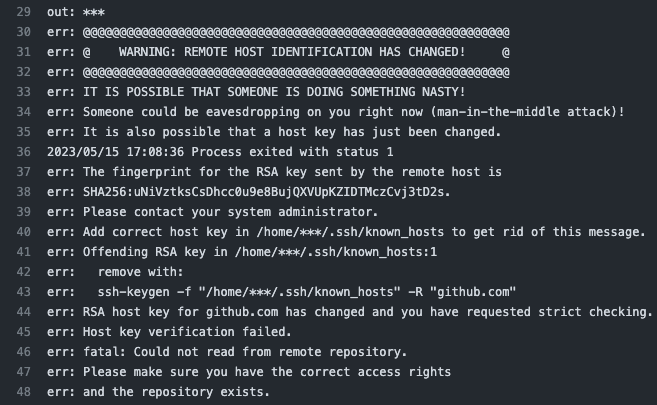

- I use GitHub to store my Markdown blog entries and once I push to the main branch, GitHub Actions take care of the publishing for me. Again - it reduces obstacles and makes it easier to publish something.
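The templating tip in the list above could be sketched in Python along these lines. This is only an illustration: the header fields, file naming, footer text, and the names `slugify`, `render_post`, and `create_post` are all assumptions, not my actual scripts or layout.

```python
from datetime import date
from pathlib import Path
import re

# Hypothetical template: the header fields, banner line, and footer text
# are assumptions for illustration, not the blog's real layout.
TEMPLATE = """---
title: {title}
date: {date}
---

![banner]({banner})

<!-- Outline: paste the points from the idea log here -->

{footer}
"""

FOOTER = "Did you find this useful or interesting? I would love to hear from you."


def slugify(title: str) -> str:
    """Lowercase the title and join its alphanumeric runs with hyphens."""
    return "-".join(re.findall(r"[a-z0-9]+", title.lower()))


def render_post(title: str, day: date, banner: str = "banner.png") -> str:
    """Fill the template so a new post starts with its boilerplate in place."""
    return TEMPLATE.format(
        title=title, date=day.isoformat(), banner=banner, footer=FOOTER
    )


def create_post(folder: Path, title: str, day: date) -> Path:
    """Write the skeleton to <folder>/<date>-<slug>.md and return the path."""
    path = folder / f"{day.isoformat()}-{slugify(title)}.md"
    if path.exists():
        # Never overwrite a post that is already in progress.
        raise FileExistsError(path)
    path.write_text(render_post(title, day), encoding="utf-8")
    return path
```

Calling `create_post(Path("posts"), "My Next Post", date.today())` would leave a ready-to-fill Markdown file in an existing `posts` folder, so the first thing you type is the content itself.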

2. Look for Opportunities

Part of my role at work is to solve problems and as you can guess with IT, they can come in all sorts of flavours and areas. Consequently, I am constantly thinking about different things and how to solve them, so whilst I do that, I keep an eye out for topics I can write about. At my job, that manifests itself as process-oriented documentation, but at home, it turns into a blog post, sometimes.

Be curious! I am often wondering why, or how, which leads down all sorts of avenues that I didn’t expect. One quick look at my previous blog articles and you can see that I cover an awful lot of things and for me, that’s great food for my brain.

In addition, some of the things I write about also come from discussions with other people. They can know so much more than you in some facet of IT, so learn from them. If they mention something you don’t know about, study it yourself and then write about it.

Online media is another great source. I learn heaps from Twitter, other people’s blogs, articles etc. Keep consuming so that you can be a producer, too. As an example, on Product Hunt the other day, the number one voted item was a Captcha based on Doom. Really! That got me thinking about making my own one, perhaps with a game JS library. And if I make it, you can bet I am going to write about it, too.

3. Bank Blog Articles

My advice is that when the wind is in the right direction, take advantage of it. By that I mean if you can, write more than one blog article a day because you can bet life, circumstances or plain old tiredness will mean that some days you won’t want to write anything. Having an article or two that you can publish when you didn’t have any spare time will be a godsend.

This isn’t as difficult as it sounds because some days you will find you have a little more time than you anticipated. Or, perhaps the blog article you wrote was quicker to do than usual? Maybe you are in the mood for writing more? Take advantage of these tail winds and type up an extra blog post, just in case.

4. Keep an Idea Log

It is really handy to keep a log of any ideas you have. You can use your phone, a notebook, scraps of paper even, but write those ideas down because otherwise, they can be fleeting. I’ve lost count of the fantastic (in my estimation, ahem) ideas I have had when I woke up, and then forgot all about, forever lost.

If you can, flesh them out a little with screenshots, notes, output, URLs, etc. too, because it will make it easier when you do come back to them or are suffering from writer’s (and idea’s) block.
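If you prefer to keep the log on your computer, a minimal sketch of an idea log in Python might look like this. The function name `log_idea`, the Markdown bullet format, and the timestamp layout are all assumptions for illustration; a notebook or phone app does the same job.

```python
from datetime import datetime
from pathlib import Path
from typing import Optional


def log_idea(
    log_file: Path, idea: str, *notes: str, now: Optional[datetime] = None
) -> str:
    """Append one idea, plus optional notes or URLs, to a Markdown log file."""
    stamp = (now or datetime.now()).strftime("%Y-%m-%d %H:%M")
    lines = [f"- [{stamp}] {idea.strip()}"]
    # Notes, URLs, or snippets of output become indented sub-bullets.
    lines += [f"  - {note.strip()}" for note in notes]
    entry = "\n".join(lines) + "\n"
    # Open in append mode so earlier ideas are never overwritten.
    with log_file.open("a", encoding="utf-8") as handle:
        handle.write(entry)
    return entry
```

For example, `log_idea(Path("ideas.md"), "Captcha based on a game", "seen on Product Hunt")` adds a dated bullet with one note underneath, ready to be fleshed out later.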

5. Finally, General Tips

It might be tempting to just keep churning out the articles without reading them but that would be a mistake. It is very easy to make typos and errors so read over your blog articles a few times, and in a few different ways. For instance, I read the Markdown, but also like to see it on the webpage. In doing so, it tricks my brain into reading it more closely.

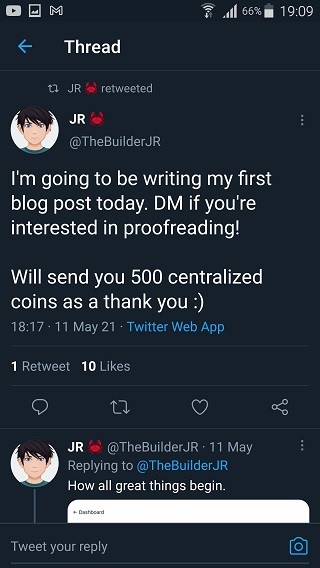

Another idea is to ask someone else to read it. Twitter is really good for this because there are lots of really kind people about who will chip in if you ask nicely.

I still make mistakes, but I catch an awful lot more and doing this helps me think through any bits that need to be added. It can also give me suggestions for other blog entries, especially where I know there is more to say on a particular topic.

Writing for 30 days may seem daunting, but it does get easier. Your writing will hopefully improve and so will the speed at which you complete the blog posts, so hang in there till the end.

Also, consider breaking up bigger blog articles into parts. I did this with the small JavaScript program to convert weight and the bigger one on the Game of Life and Jest which I am still writing. Had I placed all those in one article, it would have been a chore to write and to read. For some of you, it might still be a chore to read, haha!

I’ve often read that if you are looking for regular readers, don’t do what I do, which is write about all sorts! Targeted blogs seem to do better for building up a following, so aim for that if that is your goal.

Lastly, don’t worry about needing to know everything, either. I certainly am not an expert in anything, but I do want to learn and so do many others. I have also found many, many times that things I thought were easy, or that everyone knew, were much harder or unknown to others. Equally, it was the opposite way round from their perspective too. No one can know everything and that’s probably for the best.

Well, that’s it. I hope that you found this useful and that it may encourage you to start writing. Send me the URL for your blog and you’ll get me reading it at least :-)

Hi! Did you find this useful or interesting? I have an email list coming soon, but in the meantime, if you read anything you fancy chatting about, I would love to hear from you. You can contact me here or at stephen ‘at’ logicalmoon.com
